Building trust in context

This week, I had the pleasure of meeting Hilary Sutcliffe - as part of the Vincent Fairfax Fellowship. We had an opportunity to discuss her work on Taking Trust Seriously.

Let me start by capturing a few of Sutcliffe's most interesting points:

Trust is an outcome, not a behaviour

Trust is the belief that an expectation will be fulfilled - so ultimately, trust is a feature of the relationship between your behaviour and the expectations of the one doing the trusting. These expectations can be narrow (I trust the cab driver to take a reasonable route) or broad (society’s trust in a regulator, for example).

Trust can't be pursued directly, but it can be pursued indirectly (so trust measures don't make good metrics)

Trusting first makes you more trustworthy. Distrusting creates a transactional environment which makes trust harder for everyone.

Trust is a messy interpersonal thing (a bit more like decision-making under uncertainty)

Sutcliffe has also attempted to synthesize a collection of what she calls “signals of trustworthiness”.

There are many things to like about this list. For example, I like the modesty of Sutcliffe’s description of trustworthy Intent - being “not totally selfish”, rather than some ideal of altruism that is somehow completely selfless.

I also like the inclusion of the 8th element here - Evidence of Trustworthiness. After all, if trust is ultimately the belief an expectation will be fulfilled, and these signals are effectively the kinds of expectations in question, there needs to be some evidence to justify the belief.

However, as with many positive descriptions of virtue, these signals raise as many questions as they answer - for example, what counts as “taking seriously others’ concerns, views and rights” in order to signal respect?

So, my first intuition is to add to Sutcliffe’s model how we might fail to embody these signals of trustworthiness. A few examples:

Competence - When do we fail to deliver competently? When do we knowingly set expectations we can never meet?

Fairness - What sort of unfairness are we most liable to exhibit? Which governance processes are most susceptible to “disruption by exception” by more powerful stakeholders?

Integrity - When do we avoid accountability? When do we feel tempted to obfuscate? When do we feel least confident in our own judgement of our performance/behaviour, and crave external feedback?

and so on.

This isn’t an original move. Any good UX practitioner will remind you that “usability is not a feature” because it’s not a positive attribute - what great UX people and processes do most of the time is remove barriers to usability. Interestingly, usability - and, more generally, accessibility - is about identifying a mismatch between (makers’) expectations and (users’) actual capabilities, needs and behaviours. One of the first principles of improving usability is trusting the intuitions of the user of the system - the user is never to be treated as the broken component of the human-computer system.

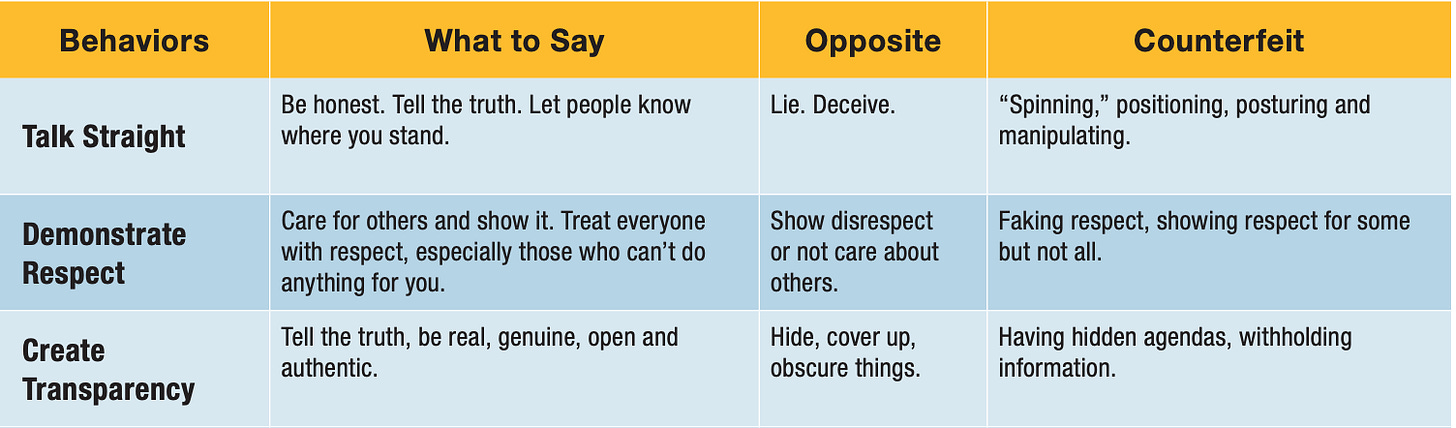

Another precursor to this is Stephen Covey’s very clever use of both Opposites and Counterfeits* to clarify his view of the 13 most important trust behaviours. Here are a few examples:

The Opposite of “Talking Straight” in his model is malicious: lying or deceiving. By contrast, Counterfeits are, in my opinion, all good examples of what you might call “Ethical Bullshit” (after Harry Frankfurt), where the counterfeiter mimics the posture of the trust behaviour without any real intention to deserve trust.

Manageable trust; becoming decent

The second intuition I have is to point out that Sutcliffe’s 7 signals of trustworthiness can easily become overwhelming. Any attempt to spell out the behaviours implied in each of those signals will spawn a very large list of behaviours, all of which have some sort of merit. Which of these should our team prioritise? Which can we ignore without undermining trust, or acting hypocritically?

I think the reason this becomes overwhelming is that trust behaviours are being described in isolation from the rest of the responsibilities that inform a team’s behaviour. After all, while every team depends on trust to do its job, there is no team whose job it is simply to build trust. (And if there were such a team, wouldn’t we be a little suspicious of their real purpose? Which, I guess, is another way of agreeing with Sutcliffe that we can’t build trust directly.)

Cashing out the dimensions or signals of trust like Sutcliffe does is certainly useful, but it does have the side-effect of encouraging idealistic thinking. When we start by describing what trust is, we seem to end up with an unmanageable responsibility.

I have in mind the excellent work of Todd May, consulting philosopher on The Good Place and author of A Decent Life: Morality for the Rest of Us. Here’s a beautiful passage from that book:

If it is difficult for us to live up to the kinds of moral demands placed upon us by the extreme altruists of practice or moral theory, it is even more difficult—perhaps impossible—for us to live up entirely to the sense we have of who we are. This need not be a deep source of worry to us. If we are willing to admit this to ourselves, then we do the best we can to figure out who we are and go on from there. (189-190)

This humble, reflective ambition to fulfill our moral responsibilities a little better each day is what May means by decency.

What I like about May’s work is that it recognizes that people may be, on the whole, morally mediocre, but also recognizes that they have other things to do apart from becoming morally perfect - as he puts it, we all have lives to lead, and a world of morally perfect beings would be rather boring (as The Good Place explores). May also recognizes that moral exemplars - saints, both religious and secular - aren’t always motivating. In the language of Liz Wiseman’s Multipliers, sometimes they act as accidental diminishers, at times even inadvertently excusing bad behaviour because the expectation to live up to such a role model is so unreasonable.

One of May’s (tongue-in-cheek) Nine Rules for Moral Decency that Should Be Followed Strictly and Without Exception is “Enjoy reading philosophy, even when it advises you to be better than you can reasonably be.” Sutcliffe’s signals of trust risk being philosophical in this sense.

Starting from where we are

So what to do with these signals of trust? Well, this is where describing the Field of Responsibility will be useful. If we can map out the responsibilities (to ourselves, to each other, and to others) that already motivate our behaviour in our current context, we can - as May puts it - “figure out who [and where] we are, and go on from there”.

Once we have a shared view of how Motives, Evidence, Rules and Missions influence our behaviour, we can then reasonably ask ourselves how trust is enabled or impeded in our local world. For example, we can ask:

Which of our missions - if we acted on them uncritically - would undermine our trustworthiness?

Where do we find ourselves compensating for a lack of trust through rigid rules, micromanagement or burdensome transparency?

How would a high trust environment be different?

How might we help each other to avoid damaging trust?

This is a good point to introduce the four “dimensions” or “levers” in the centre of the diagram above, because together they represent the collective sphere of control, within which a team can attempt to modify that field of responsibility in some way.

Goal - a commitment that the team makes to each other

Craft - the expectations the team shares internally about what good and bad practice look like

Role - the shared understanding of what the team’s job is, and how the different team members’ roles should contribute to that

Place - the interfaces, resources and artefacts whose affordances make certain sorts of behaviours easier or harder

A team that is transparent with itself about its field of responsibility, and about how its own ethos is informed by that context, is a team that can modify that ethos to, for example, earn greater trust.

Contrast this with a team that tries to consider trust in isolation from this rich, implicit normative landscape. Perhaps you can start to see how the team that understands its own field of responsibility might have a better chance of making those critical “moments of trust” an explicit part of their experience, and, hopefully, make their behaviour more trustworthy over time.

* I’ve heard Counterfeit trust behaviours referred to as Shadow behaviours, and though I can’t find the source, I like the imagery of that a little better than Counterfeits, because it’s more morally neutral. Often, we indulge in bullshit trust behaviours, not out of any sociopathic desire to undermine the signals of trust, but simply because we don’t have the time or resources to embody them properly. Sometimes those shadow behaviours are effectively incentivised within our context. Recognizing those incentives (within the field of responsibility) is a first step towards removing or mitigating them.

I love feedback. I do this to make connections, and prompt richer conversations. So if this piece triggered a thought for you, whether it was positive or negative, related or completely tangential, please don’t hesitate to leave a comment.
